Pixel-based Facial Expression Synthesis

Arbish Akram, Nazar Khan

25th International Conference on Pattern Recognition (ICPR2020)

Abstract

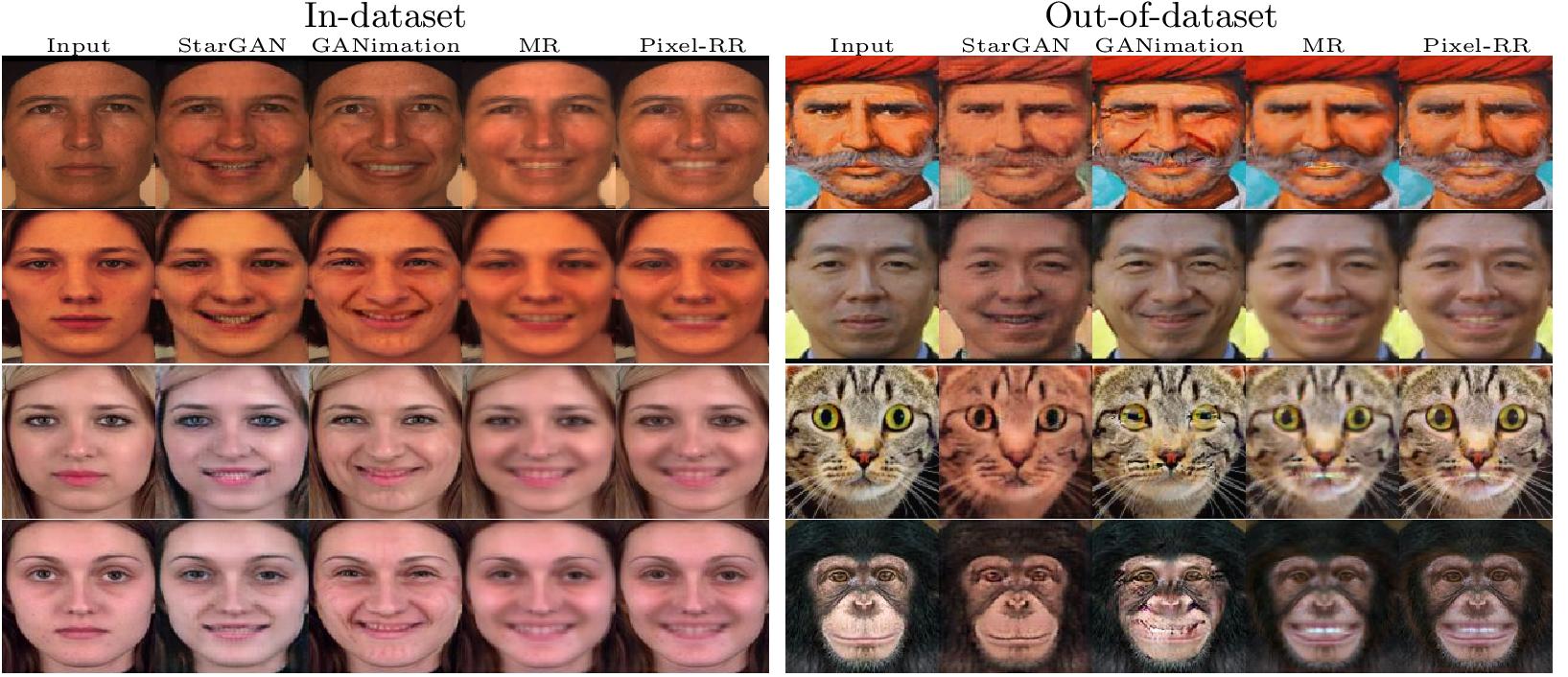

Facial expression synthesis has achieved remarkable advances with the advent of Generative Adversarial Networks (GANs). However, GAN-based approaches generate photo-realistic results mostly when the testing data distribution is close to the training data distribution. The quality of GAN results degrades significantly when testing images come from even a slightly different distribution. Moreover, recent work has shown that facial expressions can be synthesized by changing localized face regions. In this work, we propose a pixel-based facial expression synthesis method in which each output pixel observes only one input pixel. The proposed method achieves good generalization capability while using only a few hundred training images. Experimental results demonstrate that the proposed method performs comparably to state-of-the-art GANs on in-dataset images and significantly better on out-of-dataset images. In addition, the proposed model is two orders of magnitude smaller, which makes it suitable for deployment on resource-constrained devices.
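To make the idea of "each output pixel observes only one input pixel" concrete, here is a minimal sketch, not the authors' implementation. It assumes each pixel is modeled by its own scalar ridge regression `y = w * x + b` fitted on paired input/target images; the function names `fit_pixel_models` and `synthesize`, the regularization parameter `lam`, and the choice of ridge regression are all illustrative assumptions.

```python
def fit_pixel_models(inputs, targets, lam=1e-3):
    """Fit one scalar ridge regression y = w * x + b per pixel (illustrative).

    inputs, targets: lists of flattened images, each a list of floats of the
    same length. Image k in `inputs` is paired with image k in `targets`.
    lam: hypothetical ridge penalty on the weight w (the bias is unpenalized).
    Returns (weights, biases) with one entry per pixel.
    """
    n = len(inputs)
    num_pixels = len(inputs[0])
    weights, biases = [], []
    for p in range(num_pixels):
        # Each pixel model sees only the values at its own location p.
        xs = [img[p] for img in inputs]
        ys = [img[p] for img in targets]
        mean_x = sum(xs) / n
        mean_y = sum(ys) / n
        cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
        var = sum((x - mean_x) ** 2 for x in xs)
        w = cov / (var + lam)  # closed-form ridge solution for the slope
        weights.append(w)
        biases.append(mean_y - w * mean_x)
    return weights, biases


def synthesize(image, weights, biases):
    """Map a flattened input image to an output image, pixel by pixel."""
    return [w * x + b for x, w, b in zip(image, weights, biases)]
```

Under these assumptions the model stores only two numbers per pixel, which illustrates why a pixel-wise mapping can be far smaller than a convolutional generator.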

Presentation Video

Files


| Paper | Presentation | Poster |

Bibtex

@inproceedings{akram2021-pixel_fes,
  author = {Akram, Arbish and Khan, Nazar},
  title = {{Pixel-based Facial Expression Synthesis}},
  booktitle = {International Conference on Pattern Recognition (ICPR)},
  month = {January},
  year = {2021}
}
